New AI systems that can transform written language into ‘photorealistic images’ have the potential to reshape the creative industries and start a new era of machine-made photo appropriation.

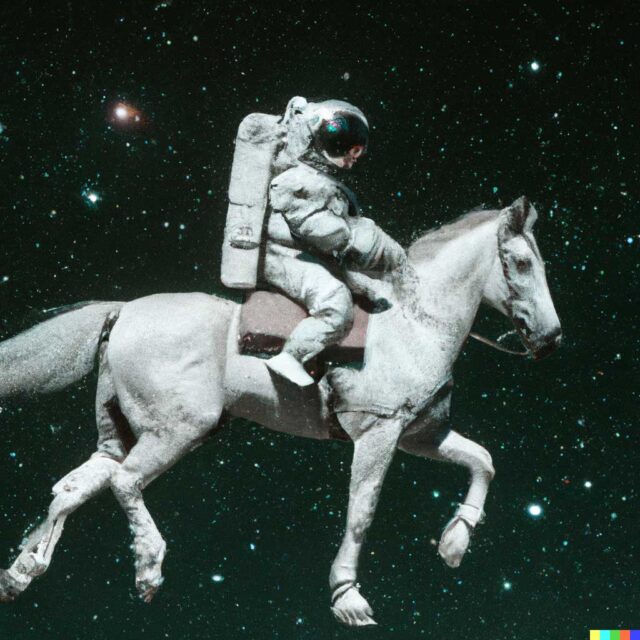

Two major new AI-driven image generation systems, OpenAI’s Dall-E 2 and Google’s Imagen, can generate graphics based on written prompts. If a user wants to see something like, say, ‘An astronaut riding a horse in a photorealistic style’ [above], Dall-E 2 does a decent job of rendering it.

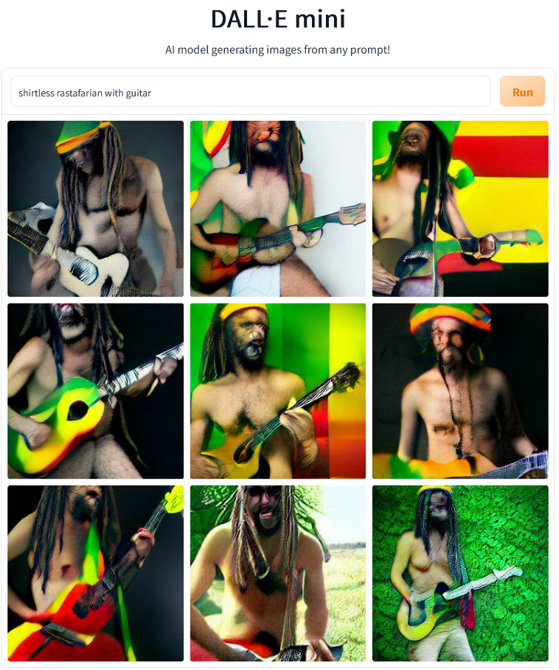

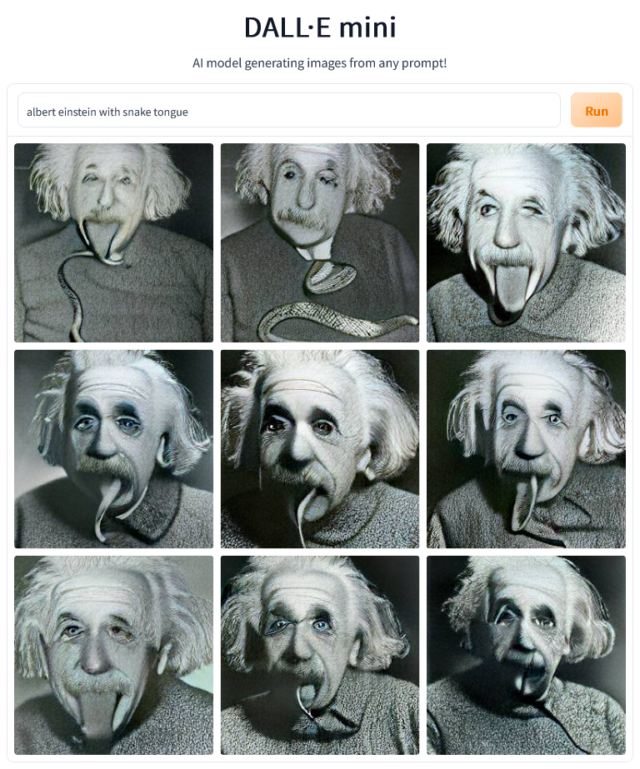

While OpenAI’s and Google’s advanced new AI systems aren’t yet open to the public, a far more primitive and flawed alternative called Dall-E Mini is available.

Dall-E Mini is made by independent developer Boris Dayma and isn’t linked to OpenAI. It was named Dall-E Mini in homage to OpenAI’s original concept, and has since been rebranded as Craiyon at OpenAI’s request. Dayma’s AI system recently went viral for producing nightmarish renderings, often with extreme distortions. Like the more advanced systems, it creates these images using information scraped from huge image datasets.

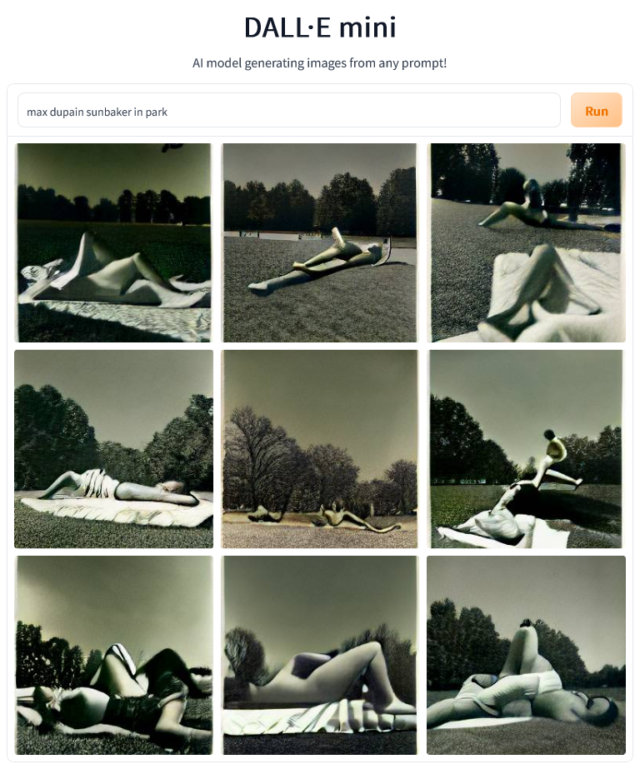

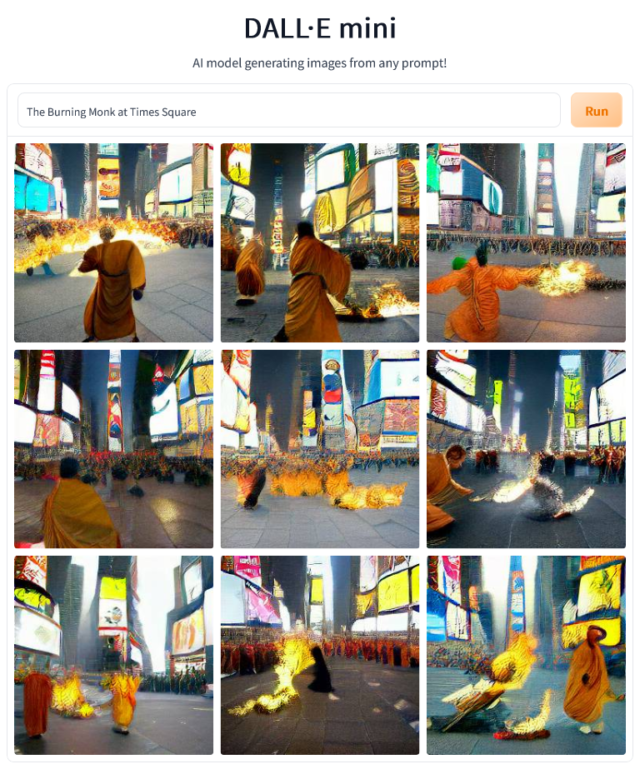

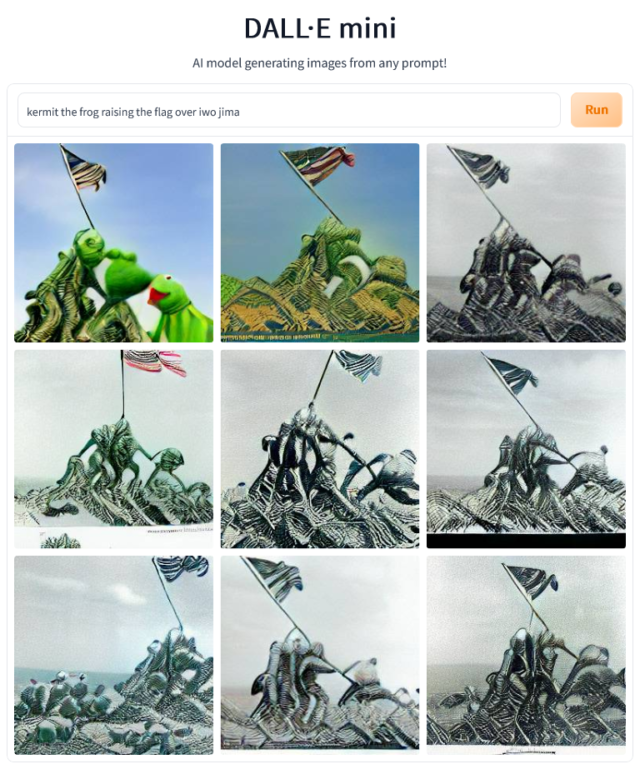

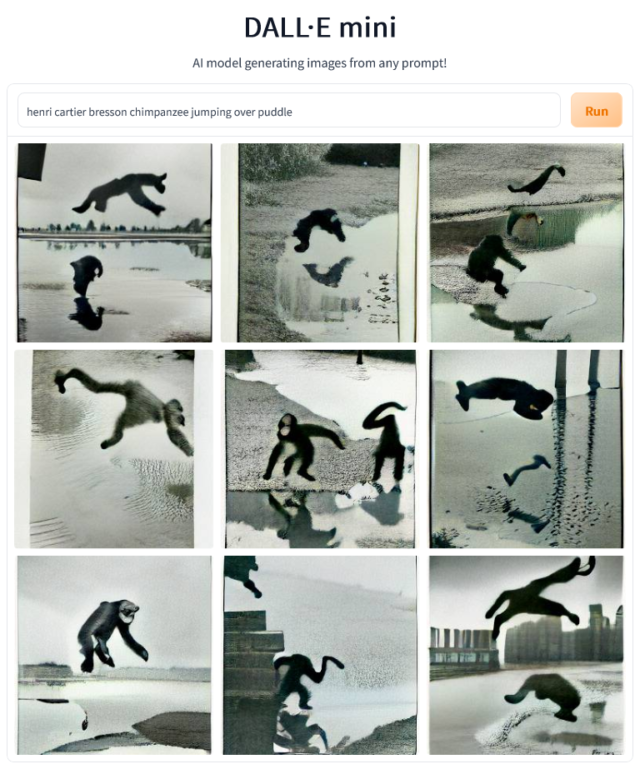

While Dayma’s new ‘robot art’ seems incapable of creating hyper-realistic renderings, some of its more creative concepts are quite striking and could easily fit among the freaky art pieces hung on the walls of Hobart’s MONA.

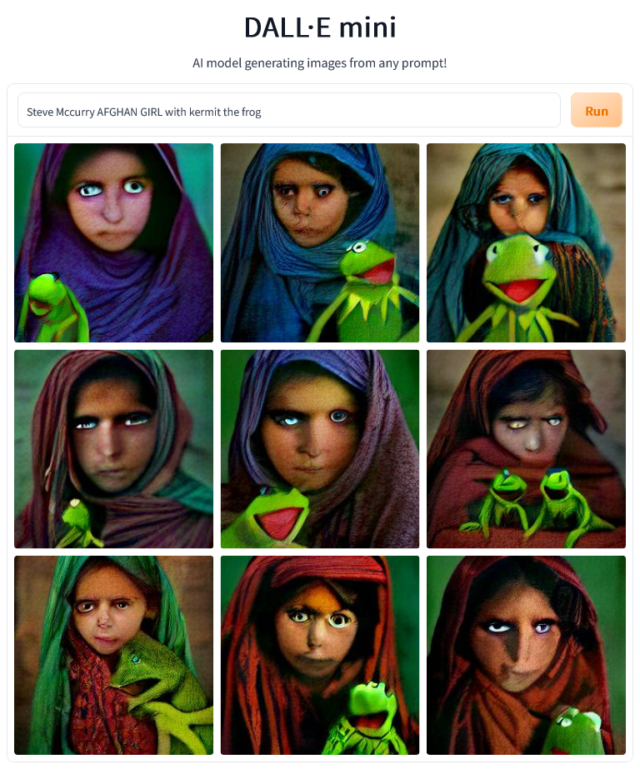

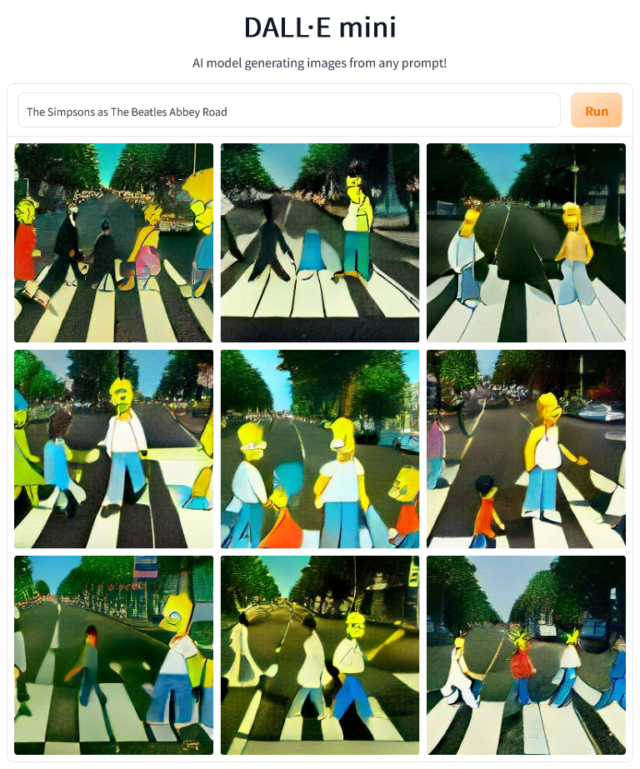

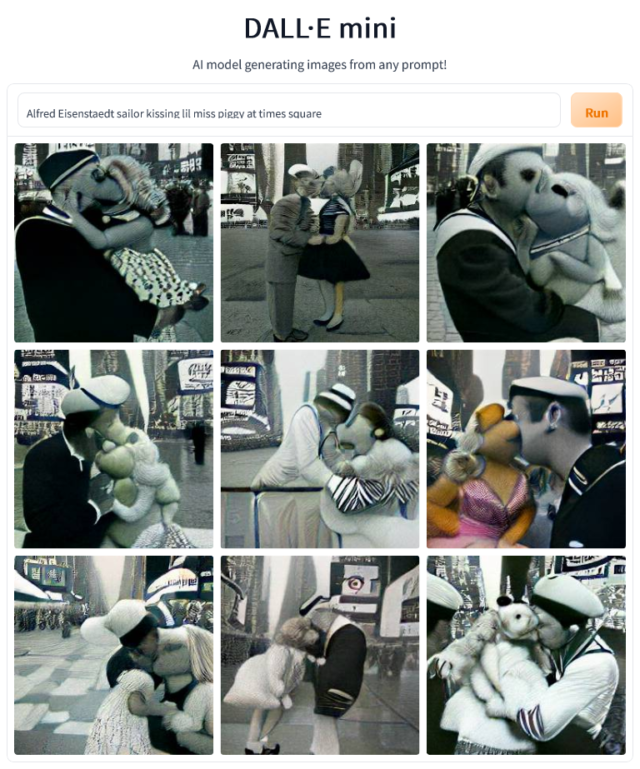

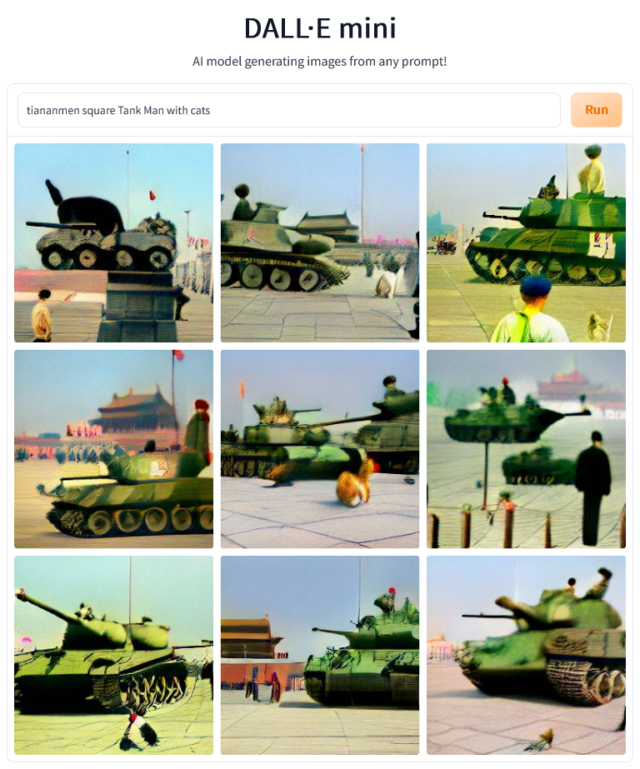

Inside Imaging ran tests to find out whether the primitive, open source Dall-E Mini could appropriate famous and iconic photos: the kind of work an art-appropriation hack like, say, Richard Prince might create. Scroll to the bottom of this article for the results.

How do they work?

The systems are trained by scanning millions, or possibly billions, of images and their associated keywords. This information is collected and catalogued, then used to generate new imagery based on a written prompt. For the purposes of this article, we’ll focus on OpenAI’s Dall-E 2, but Google’s Imagen appears to operate in a similar fashion.

The Dall-E 2 system was trained ‘on pairs of images and their corresponding captions. Pairs were drawn from a combination of publicly available sources and sources that we licensed’.

‘Dall-E 2 has learned the relationship between images and the text used to describe them. It uses a process called “diffusion”, which starts with a pattern of random dots and gradually alters that pattern towards an image when it recognises specific aspects of that image.’
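To make the ‘random dots gradually altered into an image’ idea more concrete, here is a deliberately simplified Python sketch. It is not how Dall-E 2 is implemented: the `toy_denoiser` function is a hypothetical stand-in that already ‘knows’ the finished picture, whereas in a real diffusion model that role is played by a trained neural network steered by the text prompt. The 16-value list is likewise a hypothetical 4×4 greyscale ‘image’ chosen only for illustration.

```python
import random

def toy_denoiser(noisy, target):
    # Hypothetical stand-in for a trained network. A real diffusion model
    # would estimate the clean image from `noisy` (and the text prompt);
    # this toy version simply returns the known target.
    return list(target)

def mean_abs_distance(a, b):
    # Average per-pixel difference between two equally sized images.
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)

def toy_reverse_diffusion(target, steps=50, seed=0):
    """Start from random dots and nudge them towards the denoiser's estimate.

    Returns the final image and the distance to the target after each step.
    """
    rng = random.Random(seed)
    # Step 0: a pattern of random dots (Gaussian noise), one value per pixel.
    x = [rng.gauss(0.0, 1.0) for _ in target]
    history = [mean_abs_distance(x, target)]
    for t in range(steps, 0, -1):
        estimate = toy_denoiser(x, target)
        # Move a fraction 1/t of the way towards the estimate. The fraction
        # grows as t counts down, so the remaining noise shrinks by an equal
        # amount each step and the final step (t == 1) lands on the estimate.
        alpha = 1.0 / t
        x = [(1.0 - alpha) * xi + alpha * ei for xi, ei in zip(x, estimate)]
        history.append(mean_abs_distance(x, target))
    return x, history

if __name__ == "__main__":
    # Hypothetical 4x4 greyscale "image": a bright diagonal on black.
    target = [1.0 if row == col else 0.0 for row in range(4) for col in range(4)]
    image, history = toy_reverse_diffusion(target, steps=10)
    print("distance to target at each step:", [round(d, 3) for d in history])
```

Running the script prints the distance to the target shrinking step by step until it reaches zero. In a real text-to-image model the estimate is recomputed by the network at every step and conditioned on the prompt, so it is not fixed in advance as it is in this toy.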

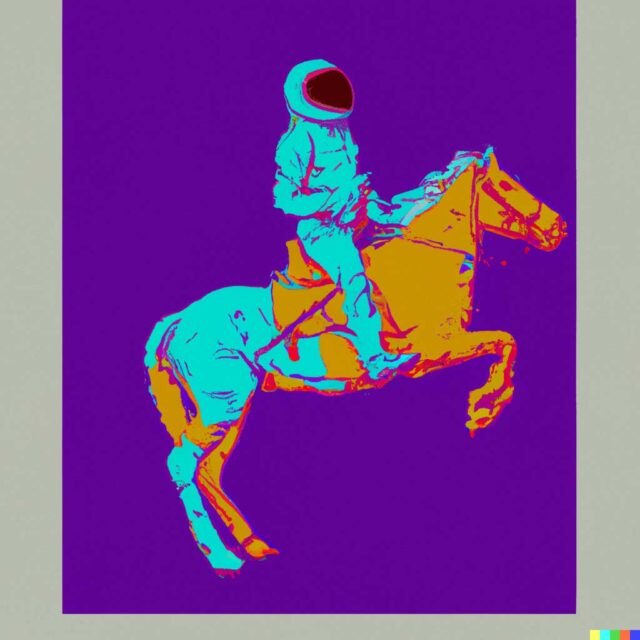

The OpenAI team have showcased a few examples of Dall-E 2 in action and the results are undeniably impressive. The system is capable of generating photorealistic images, as well as stylised graphics such as cartoons and crayon drawings, or even images in the style of specific artists like Basquiat and Andy Warhol.

However, the team undoubtedly cherry-picked the best possible outcomes, and it’s highly likely there are shortcomings and limitations with certain prompts.

OpenAI is ‘highly uncertain which commercial and non-commercial use cases might get traction’, but speculates it could be used for education, art/creativity, marketing, architecture/real estate/design, and research.

Dall-E 2 can also modify an existing image based on a text prompt.

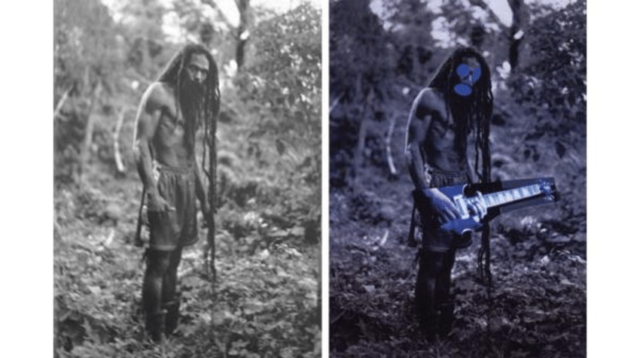

As mentioned earlier, this could be used to generate controversial art similar to that of the infamous appropriator Richard Prince. For instance, just as Prince appropriated Patrick Cariou’s photo of a Rastafarian and placed a guitar in the subject’s hands [below], a Dall-E 2 user could source Cariou’s photo and prompt the AI to ‘place electric guitar in subject’s hands’. There is also a broader conversation to be had about how this type of AI system could affect creative industries such as graphic design.

New AI-powered image generation systems also raise problems with regard to copyright infringement. While Prince’s legal team successfully swatted away Cariou’s copyright infringement lawsuit by arguing the work was transformative and therefore ‘fair use’, it’s unclear whom Cariou could sue if the ‘art’ were generated by a program. Would it be the individual writing the text prompt, OpenAI, or the non-human Dall-E 2 system?

‘The model can generate known entities including trademarked logos and copyrighted characters,’ explains OpenAI’s team in an article on Dall-E 2 risks and limitations. ‘OpenAI will evaluate different approaches to handle potential copyright and trademark issues, which may include allowing such generations as part of “fair use” or similar concepts, filtering specific types of content, and working directly with copyright/trademark owners on these issues.’ – The Richard Prince defence.

Misuse and abuse

This brave new dystopia of advanced machine-made digital image creation is still a work in progress. The primary reason the systems aren’t publicly available is that OpenAI and Google are concerned about them being misused, abused or weaponised.

OpenAI explains that a series of safeguards have been implemented and will be refined, such as limiting the ability to generate violent, hateful or pornographic images, removing explicit content overall, and preventing the photorealistic generation of real people’s faces. However, OpenAI acknowledges the filters have deficiencies, as it’s not possible to predict every way someone could misuse the system.

Google’s Imagen team is also aware of the ethical challenges. For instance, the AI may indeed be racist!

‘The data requirements of text-to-image models have led researchers to rely heavily on large, mostly uncurated, web-scraped datasets. While this approach has enabled rapid algorithmic advances in recent years, datasets of this nature often reflect social stereotypes, oppressive viewpoints, and derogatory, or otherwise harmful, associations to marginalised identity groups.’

While these new AI systems have been refined over several years, they still appear to be in their infancy. It’s anyone’s guess whether this technology will be a mere flash in the pan or a serious new way to generate imagery.

Inside Imaging ran tests to see if the Dall-E Mini [Craiyon] AI system could make decent appropriations of famous photos. Initially we ran somewhat complex tests such as ‘Steve McCurry’s Afghan Girl at McDonalds’, which didn’t yield the desired results. So we dumbed it down a bit.
