(…but couldn’t be arsed to ask!)

It’s tempting to view the introduction of 4K technology as just another ‘trend’ like the enthusiasm for 3D a few years ago. But you’d be wrong. While both 3D and 4K were important product differentiators in the TV market and both gained toeholds in stills cameras, 4K offers much more to a wider range of people – and it’s likely to be with us for longer.

Today, 3D has all but vanished from marketing copy, but 4K appears in virtually every new piece of imaging equipment from smartphones to interchangeable lens cameras. It’s driven by consumer demand for higher video quality, plus an evolution in recording technology that delivers the fast data transfer speeds and high-capacity storage devices 4K depends upon.

The evolution has been quite gradual. It took the introduction of the SDXC family of memory cards in 2009 to achieve storage capacities greater than 32GB and read/write speeds up to 50MB/second. The next step came in 2011, when card capacities rose to 128GB and transfer speeds exceeded 100MB/second. But even then, we had to wait for the UHS-II bus interface to offer speeds in excess of 156MB/second before 4K recording could really take off.

Consumer-level 4K recording found its feet with Panasonic’s Lumix DMC-GH4, the first stills camera able to record 4K video clips in a non-proprietary format that could be edited with most movie editing software. This camera marks the start of the true 4K ‘revolution’.

While primarily designed for recording in the consumer UHD-1 (Ultra-High-Definition) format, with a resolution of 3840 x 2160 pixels and a 16:9 aspect ratio, the GH4 was also the first to support the professional Digital Cinema Initiatives (DCI) resolution standard of 4096 x 2160 pixels, which has an aspect ratio of approximately 1.9:1. This option is now common in the high-end interchangeable-lens cameras that are being used increasingly for professional multimedia projects.
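The aspect ratios and pixel counts of the two 4K frame sizes can be checked with a few lines of Python. This is only a quick sketch; the `FORMATS` table and the `describe` helper are names made up for the illustration.

```python
from math import gcd

# Frame sizes quoted in the text (width, height in pixels).
FORMATS = {
    "UHD-1": (3840, 2160),
    "DCI 4K": (4096, 2160),
}

def describe(width, height):
    """Return (decimal aspect ratio, reduced ratio string, megapixels)."""
    divisor = gcd(width, height)
    reduced = f"{width // divisor}:{height // divisor}"
    return round(width / height, 2), reduced, round(width * height / 1e6, 2)

for name, (w, h) in FORMATS.items():
    ratio, reduced, megapixels = describe(w, h)
    print(f"{name}: {ratio}:1 ({reduced}), {megapixels} MP")
```

UHD-1 reduces exactly to 16:9, while the DCI frame works out at 256:135, which is where the 'approximately 1.9:1' figure comes from.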

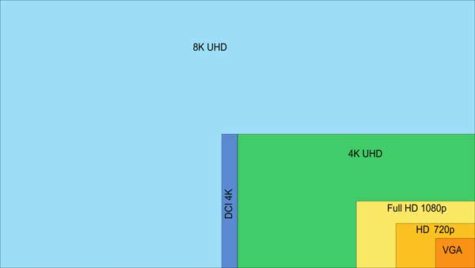

Interestingly, the quest for higher resolution continues; late in 2007, the 8K video format (8192 x 4320 pixels) was standardised by the SMPTE (Society of Motion Picture & Television Engineers) and then accepted as the international TV standard in 2012. Broadcasts in this format began in 2014 with public viewings of the Winter Olympics in February and the FIFA World Cup in June.

Unfortunately, development of cameras has been slow because most networks can’t carry 8K signals, and because recording 8K footage requires sensors with a resolution of at least 35 megapixels. This pushes camera prices well above consumer and pro-sumer levels and places constraints on camera designs and construction technologies (keeping sensors from overheating is a significant issue).

Nonetheless, film-makers are increasingly demanding 8K cameras for their ability to capture better 4K footage for movies that will be screened in cinemas. So far only professional cameras have been produced and 8K is not expected to become a mainstream consumer display resolution until about 2023.

A comparison of existing video frame sizes is shown in the diagram to the right:

4K Displays

In parallel with producing 4K content comes the need for screens to display it. This has driven developments in both TV sets and computer monitors, the latter particularly oriented towards video gaming, where high-resolution is demanded by players.

Interestingly, while most TV sets sold are advertised as ‘4K UHD capable’, that’s not what’s actually delivered because networks are unable to deliver reliable 4K data streams. Most Australian free-to-air broadcasters distribute either a 720p HD or 1080i FHD signal into homes.

To view 4K content, a 4K-enabled smart TV with High-Bandwidth Digital Content Protection (HDCP) 2.2 is required. In addition, Ultra HD streaming requires a consistent 25Mbps broadband connection. For most Australians without a fast enough NBN plan, receiving 4K broadcasts is chancy at best. And even with some plans, streaming can be disrupted during the busy evening hours, particularly when there are multiple users downloading broadcasts via the same hub.

Streaming in Ultra HD also uses a lot of data; roughly 7GB per hour. So anyone watching 1.5 hours of Netflix each night will consume a little over 325GB per month. Add in normal browsing and other downloads and a suitable plan should cover at least 500GB per month.
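The monthly figure is simple arithmetic, sketched below. The function names are invented for the example, and decimal gigabytes (1GB = 1000MB) are assumed.

```python
GB_PER_HOUR = 7        # approximate Ultra HD streaming usage quoted in the text
HOURS_PER_NIGHT = 1.5  # viewing habit used in the text's example

def monthly_streaming_gb(days, hours_per_night=HOURS_PER_NIGHT, gb_per_hour=GB_PER_HOUR):
    """Data consumed by nightly streaming over a month of `days` days."""
    return days * hours_per_night * gb_per_hour

def average_mbps(gb_per_hour):
    """Average bit rate implied by an hourly data figure (decimal units assumed)."""
    return gb_per_hour * 8 * 1000 / 3600

for days in (30, 31):
    print(f"{days}-day month: {monthly_streaming_gb(days):.1f} GB")
print(f"Average stream rate: {average_mbps(GB_PER_HOUR):.1f} Mbps")
```

A 31-day month gives 325.5GB, which matches the 'little over 325GB' estimate. The implied average rate of about 15.6Mbps also sits comfortably under the 25Mbps connection speed recommended for Ultra HD.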

Current 4K Broadcasts

It is possible to stream 4K video in Australia – but options are limited. YouTube began supporting 4K for video uploads back in 2010 but Netflix was the first to offer streamed access to 4K television and movie content in March 2015.

Rival streaming service Stan added 4K content to its service in April 2017, with Amazon launching its 4K-enabled Amazon Prime service in Australia in June 2018, providing streaming through iOS and Android devices. Foxtel began providing TV broadcasts of ‘selected’ sports in 4K on a dedicated 4K Ultra HD channel (channel 444) in August 2018. Broadcast Australia began running trials of 4K transmissions via Network Ten’s free-to-air broadcast channels in mid-2019.

Some services require a satellite connection and a special set-top box to view the broadcasts; others use a regular ‘smart’ TV set with broadband access via the NBN. Streaming quality is usually limited by the NBN connection. With all streaming services, if the connection can’t deliver the best quality stream, it will adapt to deliver the best quality it can, capped at the limits set by the subscriber.

Cameras

Today, 4K video recording has been integrated into virtually every smartphone and camera released, although capabilities vary widely. Most smart devices and consumer-level cameras are restricted to UHD-1 format with a maximum frame rate of 25 fps (PAL) or 30 fps (NTSC). They usually crop higher-resolution frames and often lack manual adjustments, audio input and output and optical zoom.

A few recent cameras – notably Panasonic’s S1, S1R and GH5, Fujifilm’s X-T3 and the Canon EOS-1DX Mark II – support 4K recording at 50/60 fps (PAL/NTSC). But even then they may not have the same capabilities as professional video camcorders, although they are coming close enough for some professional users.

As a recording format, 4K has some significant advantages for photographers:

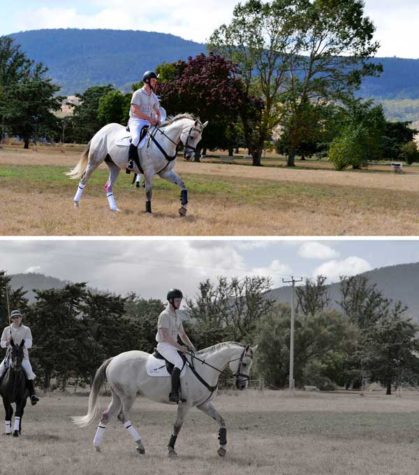

1. With individual frames having a resolution of around eight megapixels, JPEG stills can be extracted for printing at up to A3 size (420 x 297mm). Event, wedding and sports photographers can opt to shoot video, knowing they will obtain printable stills in addition to editable video footage. But they can’t record raw files as movie clips;

2. Recording action as a 4K movie can be better than using a still camera’s burst mode. With a frame rate of 30 frames/second (fps), there are fewer gaps in the action, giving photographers more choice of which frame to use when a still shot is required. Zooms and pans are smoother. Most cameras can record for up to 30 minutes;

3. Even on screens that don’t support 4K resolution, movies captured in 4K will look better than conventional Full HD movies. Downscaling 4K source material to HD resolution effectively oversamples each pixel by a factor of four. This delivers sharper, crisper images with a significant reduction in common video artefacts such as moiré;

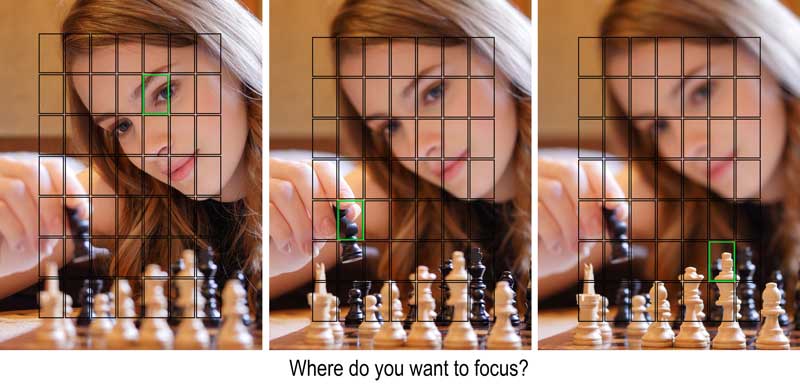

4. Users of 4K cameras can take advantage of special still shooting modes that can anticipate action and allow a choice of focusing position;

5. 4K sequences are stored in one file, making them easy to review;

6. 4K is great for capturing time-lapse sequences.
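Two of the figures in the list above, the A3 print size and the 'factor of four' oversampling, can be checked with a short calculation. This is a rough sketch: the helper names are made up, and the frame's long edge is assumed to span the full 420mm width of the sheet.

```python
MM_PER_INCH = 25.4

def pixels_per_inch(pixels, length_mm):
    """Print resolution when `pixels` are spread across `length_mm` of paper."""
    return pixels / (length_mm / MM_PER_INCH)

def oversampling_factor(source, target):
    """How many source pixels contribute to each target pixel."""
    return (source[0] * source[1]) / (target[0] * target[1])

uhd = (3840, 2160)
full_hd = (1920, 1080)

print(f"A3 long edge: {pixels_per_inch(uhd[0], 420):.0f} ppi")
print(f"4K to Full HD: {oversampling_factor(uhd, full_hd):.0f} source pixels per output pixel")
```

At roughly 232 pixels per inch, an A3 print from a 4K frame falls a little short of the 300 ppi often quoted for fine printing, but it is normally adequate at typical viewing distances for a print of that size.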

Downsampling Alternatives

With most modern cameras offering resolutions of 20 megapixels or more, signals from the sensor must be reduced in size before they can be recorded as 4K video frames. Alternatively, the frame can be cropped, with only the centre area used for the video signal. Note: frame cropping is a standard procedure when recording video with a stills camera.

While cropping is simple to implement, it can reduce the effective angle of view of the lens. Some frame cropping may be inevitable, for example when using APS-C lenses on a camera with a 36 x 24mm sensor. But it’s usually considered undesirable.

Subsampling skips multiple sensor pixels when reading out the image data. It’s commonly used in image re-sizing in both cameras and software. Downsampling integrates clusters of pixels to reduce resolution. Both are relatively popular since they retain the full image area while reducing the amount of data to be transferred and enabling higher camera frame rates.

Downsampling is preferable since it works upon all the image data, which means quality may actually be improved. Colours are also fully maintained, although some minor artefacts can be introduced when signals from very high-resolution sensors are downsampled.
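The difference between the two approaches shows up clearly on a single row of pixels. The sketch below is a deliberately simplified illustration, not any manufacturer's processing: it works on one row of made-up brightness values and ignores the colour filter array found on real sensors.

```python
def subsample(row, step):
    """Keep every `step`-th pixel and skip the rest."""
    return row[::step]

def downsample(row, step):
    """Average each group of `step` neighbouring pixels into one value."""
    return [sum(row[i:i + step]) / step
            for i in range(0, len(row) - step + 1, step)]

row = [10, 90, 10, 90, 10, 90, 10, 90]  # fine alternating detail

print(subsample(row, 2))   # [10, 10, 10, 10]
print(downsample(row, 2))  # [50.0, 50.0, 50.0, 50.0]
```

Subsampling happens to land on the dark pixels every time, so the bright ones are lost altogether and the result misrepresents the scene. Downsampling uses every value, which is why it tends to produce fewer artefacts.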

Pixel binning also combines the signals from a cluster of pixels, either adding or averaging them to produce a single value. It requires a lot of processing power and sophisticated algorithms but reduces the amount of data to be transferred and enables higher camera frame rates. Each frame has a lower resolution but the camera records the same field of view as the full-resolution image.

The outcomes of pixel binning depend on the algorithms used. If the pixel values are added, the image brightness increases, whereas if they are averaged, image noise will be reduced. Both can be useful when shooting in low light levels.
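A toy example helps to show the two outcomes. The sketch below bins 2 x 2 clusters of made-up pixel values; the `bin_2x2` function is hypothetical, and real cameras bin pixels of the same colour on the sensor rather than plain brightness values like these.

```python
def bin_2x2(image, mode="average"):
    """Combine each 2 x 2 cluster of pixel values into one output value."""
    binned = []
    for y in range(0, len(image) - 1, 2):
        out_row = []
        for x in range(0, len(image[y]) - 1, 2):
            total = (image[y][x] + image[y][x + 1]
                     + image[y + 1][x] + image[y + 1][x + 1])
            out_row.append(total if mode == "sum" else total / 4)
        binned.append(out_row)
    return binned

# A dim, slightly noisy patch whose 'true' brightness is about 20.
image = [
    [20, 24, 18, 22],
    [22, 18, 20, 20],
    [21, 19, 23, 17],
    [19, 21, 19, 21],
]

print(bin_2x2(image, "sum"))      # [[84, 80], [80, 80]]
print(bin_2x2(image, "average"))  # [[21.0, 20.0], [20.0, 20.0]]
```

Adding the values roughly quadruples the brightness of each output pixel, while averaging keeps the brightness where it was and smooths out the pixel-to-pixel variation.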

Unfortunately, few manufacturers explain how their cameras go about adjusting the data stream from their image sensors for video output. However, it’s become common for claims of ‘full pixel readout without pixel binning’ to be a key part of promotional materials, without any explanations of why this should be advantageous. One can only assume their downsampling algorithms have been fine-tuned to a high degree and their image processor chips are capable of handling large amounts of data in very fast streams.

Professional 4K Recording

While the ability to record 4K movie clips is almost ubiquitous, only a few cameras can capture professional-standard footage. Key requirements include support for at least 50fps/60fps frame rates, professional-level time coding and dedicated video profiles.

They should also be able to record high-bit-rate video to external devices and support 10-bit depth using 4:2:2 colour subsampling. For detailed information on colour subsampling visit https://en.wikipedia.org/wiki/Chroma_subsampling.

Recording 4K at 10-bit 4:2:2 in high-quality intraframe encoding takes up approximately 880 megabits/second – or nearly nine times as much data as most cameras’ internal recording systems can handle! An external recorder is required to handle such a large data stream.
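To put that bit rate into storage terms, the sketch below converts it to megabytes per second and gigabytes per minute. The 100 megabits/second internal rate is an assumption implied by the 'nearly nine times' comparison rather than a figure stated here, and decimal units are used throughout.

```python
EXTERNAL_MBPS = 880  # megabits/second, figure quoted in the text
INTERNAL_MBPS = 100  # ASSUMED typical internal recording bit rate

def megabytes_per_second(mbps):
    """Convert megabits/second to megabytes/second (8 bits per byte)."""
    return mbps / 8

def gigabytes_per_minute(mbps):
    """Storage consumed by one minute of footage at the given bit rate."""
    return mbps / 8 * 60 / 1000

print(f"Sustained write speed: {megabytes_per_second(EXTERNAL_MBPS):.0f} MB/s")
print(f"Storage per minute: {gigabytes_per_minute(EXTERNAL_MBPS):.1f} GB")
print(f"Ratio to internal recording: {EXTERNAL_MBPS / INTERNAL_MBPS:.1f}x")
```

At about 110MB/second sustained, or 6.6GB for every minute of footage, it's easy to see why this kind of recording is normally sent to an external recorder with fast, high-capacity storage.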

‘Flat’ picture profiles were introduced to enable photographers shooting with interchangeable lens cameras to record footage that could be easily integrated into a professional video workflow. It’s a consumer-level response to the more professional ‘Log’ recording, which encodes the video signal to redistribute tonal levels closer to how our eyes perceive them.

Log recording can increase the dynamic range in the recording to as much as 12 stops, in contrast to a maximum of 10 stops for most default photo styles. More details are retained in the shadows and, at the same time, highlights can be preserved, resulting in a more realistic rendition of tones.

Each manufacturer has its own proprietary Log profile. While they are all named differently – C-Log, V-Log, S-Log, etc. – they all have the same purpose. When using one of these Log profiles the image will look flat and desaturated. This is ideal for post-production editing, since both contrast and saturation are easy to fine-tune at the editing stage, when colour grading is applied.
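Why Log encoding helps can be seen by counting how many recording levels each stop of brightness receives. The sketch below compares a plain linear encoding with an idealised pure-log curve across 12 stops in a 10-bit file. It is a generic illustration only: real profiles such as C-Log, V-Log and S-Log use their own proprietary curves, and the function names are made up.

```python
CODE_VALUES = 1024  # levels available in a 10-bit recording
STOPS = 12          # dynamic range figure quoted for Log recording

def linear_values_per_stop(stops, code_values=CODE_VALUES):
    """Linear encoding: each stop below the brightest gets half as many levels."""
    return [code_values / 2 ** (n + 1) for n in range(stops)]

def log_values_per_stop(stops, code_values=CODE_VALUES):
    """Idealised pure-log encoding: every stop gets an equal share of levels."""
    return [code_values / stops] * stops

linear = linear_values_per_stop(STOPS)
log = log_values_per_stop(STOPS)

print(f"Brightest stop: linear {linear[0]:.0f} levels, log {log[0]:.0f} levels")
print(f"Darkest stop:   linear {linear[-1]:.2f} levels, log {log[-1]:.0f} levels")
```

With linear encoding, half of all the available levels go to the brightest stop and the deepest shadows get almost none. The log curve shares the levels evenly, which is why shadow detail survives and why the ungraded footage looks flat.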

4K PHOTO Modes

Panasonic pioneered the use of video recording for stills photography in its 4K PHOTO modes. These modes recorded a sequence of 8-megapixel frames using the camera’s video capabilities. But unlike video recordings, users could choose different aspect ratios, achieved by cropping the frame.

Initially there were three modes: Burst, Stop/Start (S/S) and Pre-Burst. The Burst setting simply recorded a 4K sequence at 30 fps while the shutter button was held down. The Stop/Start mode started recording at the first press of the shutter button and stopped at the second press, enabling longer recordings. The Pre-Burst mode started recording when the shutter button was half-pressed and saved all the frames from one second before the shutter button was fully pressed until one second after.
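The Pre-Burst behaviour can be pictured as a rolling buffer that always holds the most recent second of frames. The sketch below is one plausible way to model it, not Panasonic's actual implementation; the `pre_burst` function and its parameters are invented for the illustration, with frames represented by simple numbers.

```python
from collections import deque

FPS = 30  # 4K PHOTO frame rate quoted in the text

def pre_burst(frames, press_index, fps=FPS):
    """Return the frames from one second before to one second after a full press.

    `frames` is an iterable of frames in capture order; `press_index` is the
    position of the frame captured when the shutter button is fully pressed.
    """
    buffer = deque(maxlen=fps)  # rolling store of the most recent second
    saved = []
    for index, frame in enumerate(frames):
        if index < press_index:
            buffer.append(frame)            # oldest frame drops out automatically
        elif index == press_index:
            saved = list(buffer) + [frame]  # the buffered second plus the press frame
        elif index <= press_index + fps:
            saved.append(frame)             # one more second after the press
        else:
            break
    return saved

burst = pre_burst(range(100), press_index=50)
print(len(burst), burst[0], burst[-1])  # 61 20 80
```

Pressing the shutter at frame 50 saves frames 20 to 80, so a moment that occurred just before the photographer reacted is still captured.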

Post-capture focusing has been added to the 4K recording options. In this mode, the camera records a sequence of frames, making subtle adjustments to the plane of focus between frames. Photographers can play back the recorded sequence (usually about one second) and select the frame that shows the focus to be where they want it.

An increasing number of cameras with this shooting mode also support in-camera focus stacking, which combines the sharpest areas from each frame into a single JPEG image that appears sharp from its nearest point to the most distant. This mode is particularly useful in macro photography, where depth of field can be difficult to obtain.
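The idea behind focus stacking can be sketched on a single row of pixels. The example below is a heavily simplified illustration rather than any camera's algorithm: sharpness is estimated with a crude neighbour-difference measure, the two 'frames' are made-up values, and the function names are hypothetical.

```python
def local_contrast(frame, i):
    """Crude sharpness measure: how much pixel i differs from its neighbours."""
    left = frame[max(i - 1, 0)]
    right = frame[min(i + 1, len(frame) - 1)]
    return abs(frame[i] - left) + abs(frame[i] - right)

def focus_stack(frames):
    """For each pixel position, take the value from the frame that is sharpest there."""
    stacked = []
    for i in range(len(frames[0])):
        sharpest = max(frames, key=lambda frame: local_contrast(frame, i))
        stacked.append(sharpest[i])
    return stacked

near_focus = [0, 100, 0, 100, 50, 50, 50, 50]  # left half sharp, right half blurred
far_focus = [50, 50, 50, 50, 0, 100, 0, 100]   # right half sharp, left half blurred

print(focus_stack([near_focus, far_focus]))
# [0, 100, 0, 100, 0, 100, 0, 100]
```

Blurred areas show up as flat grey because the detail has been smeared out, so picking the higher-contrast frame at each position rebuilds a row that is sharp from end to end.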

These modes continue to evolve and have begun to support higher resolutions. On the release of its first cameras with ‘full frame’ (36 x 24mm) sensors, the S1 and S1R, Panasonic also introduced a new ‘6K’ recording mode that recorded video footage at 25 fps mainly for stills output via a ‘6K PHOTO’ mode. Each frame was equivalent to an 18-megapixel photo.

While the 4K PHOTO mode supports all of the aspect ratios the camera offers, with 6K recording, choices are limited to the 3:2 and 4:3 aspect ratios, resulting in frame sizes of 5184 x 3456 pixels and 4992 x 3744 pixels, respectively.

Although 6K is currently the upper limit for resolution, it’s unlikely to remain that way indefinitely. As camera resolutions increase, and as the power of processing chips and algorithms and the speeds and capacities of memory cards expand, it’s quite possible that 8K PHOTO modes will be introduced in coming years. That will be something to watch out for!

– Margaret Brown
